The Ethereum blockchain is essentially a transaction-based state machine. We begin with a blank state, before any transactions have happened on the network, and move into some final state when transactions are executed. The state of Ethereum relies on past transactions. These transactions are grouped into blocks and each block is chained together with its parent.

Transactions are processed by Ethereum’s own Turing-complete virtual machine, known as the Ethereum Virtual Machine (EVM). The EVM has its own language: EVM bytecode. Typically a programmer writes a program in a higher-level language such as Solidity. The program is then compiled down to EVM bytecode and committed to the Ethereum network as a new transaction. The EVM executes the transaction recursively, computing the system state and the machine state.

The EVM is included in the Ethereum node client software that verifies all transactions in each block, keeping the network secure and the data accurate. Many Ethereum clients exist, written in a variety of programming languages such as Go, Rust, Java and others. They all follow a formal specification that dictates how the Ethereum network and blockchain function.

In this article we will consider Geth as the basic Ethereum node software.

Transaction signing problem

Every transaction must be signed before it is sent to the Ethereum network. The signature must be recoverable, and it is needed for two reasons: first, to validate the origin of the transaction, and second, to preserve the basic blockchain properties of transparency and traceability. Traditionally, transactions on Ethereum networks can be signed either remotely, on nodes with authentication enabled, or locally at the application level with some black-box magic.

The first problem for beginners (and not only for them) is that most Ethereum gateways (such as Infura, Alchemy, Zmok and others) do not support authentication on their nodes for security reasons. So you have to run your own node or sign transactions locally.

The second problem: there is no clear and efficient cross-language interface for managing Ethereum signatures. You have to use some things in Python, some in JavaScript, and of course low-level implementations in C or Go.

In this article I would like to pass these tricky checkpoints with explanations and examples, and introduce a fast Ethereum signing application in (almost pure) Raku.

Signing node: the prototype

The remote signing node prototype was presented in the talk «Multi-network Ethereum dApp in Raku» at The 1st Raku Conference 2021. The idea is to use a pair of nodes per application: a target node in a private or public Ethereum network, and a local node running in Docker just for transaction signing.

We should set up a mocked/shared account on the local signing node: an account with the same private key, and therefore the same address, as the one we use for sending transactions to the target node.

To set up the mocked/shared account we need the private key of the origin account. A lot of account managers (like MetaMask) allow you to export the private key. Once the private key is exported, generate a keyfile and copy it to your keystore folder. The new account will be imported on the fly.

Alternatively, you can add a new account with a given private key via the JSON RPC HTTP API: just post the following request to your Geth-driven local signing node running at port 8541:

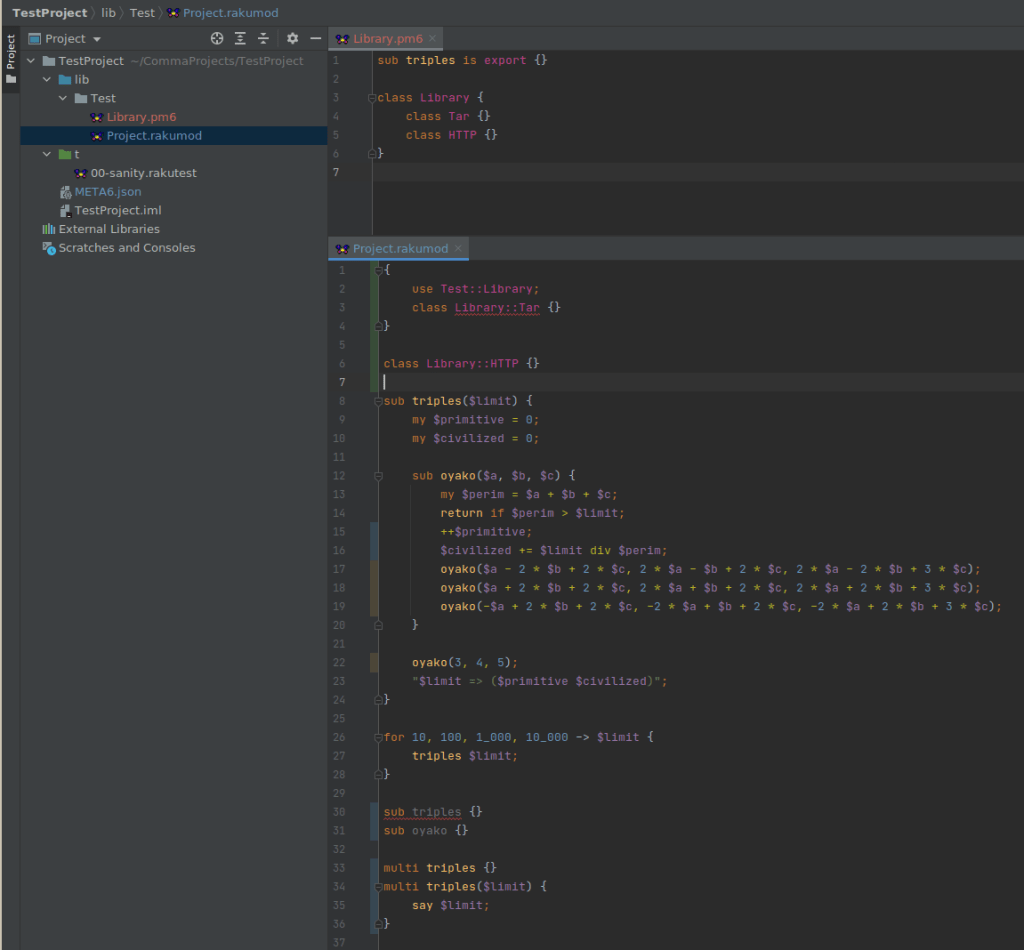

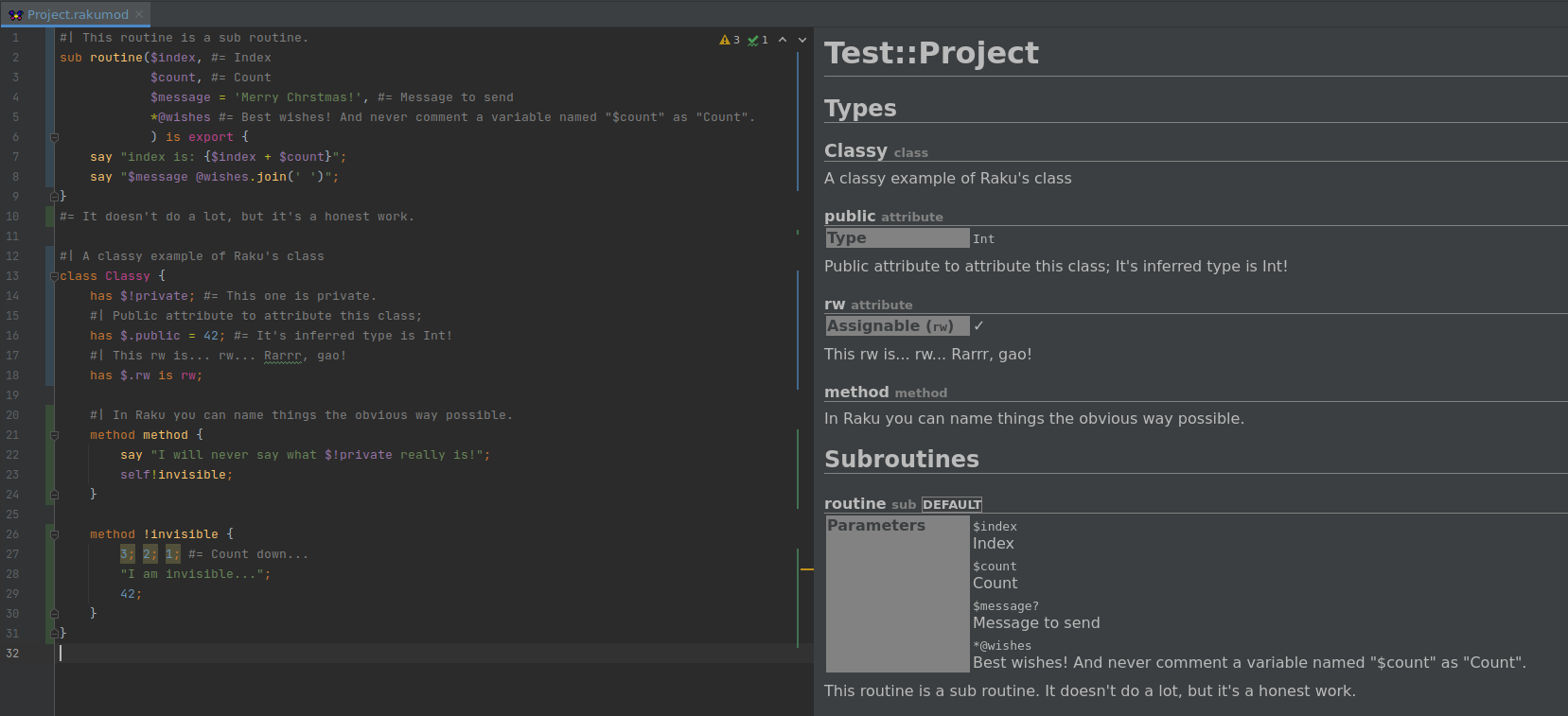
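For readers who cannot see the screenshots, here is a minimal Python sketch of how such a request body could be built. It assumes the call in question is Geth’s personal_importRawKey method (which takes the hex private key and a passphrase); the function name import_raw_key_request, the dummy key and the passphrase are made up for illustration, and nothing is actually sent to a node.

```python
import json

def import_raw_key_request(private_key_hex, passphrase, request_id=1):
    """Build a JSON RPC body for importing a raw private key.

    Assumption: the node exposes Geth's personal_importRawKey method.
    """
    # Drop the optional 0x prefix and make sure we hold 32 bytes of hex.
    key = private_key_hex[2:] if private_key_hex.startswith("0x") else private_key_hex
    if len(key) != 64:
        raise ValueError("private key must be 32 bytes (64 hex characters)")
    int(key, 16)  # raises ValueError if the string is not valid hex
    return {
        "jsonrpc": "2.0",
        "method": "personal_importRawKey",
        "params": [key, passphrase],
        "id": request_id,
    }

# Dummy key for illustration only; never hard-code a real private key.
payload = import_raw_key_request("0x" + "11" * 32, "node-passphrase")
body = json.dumps(payload)
print(body)
```

The resulting body would be posted with Content-Type: application/json to the signing node endpoint, for example the local node at port 8541 mentioned above.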

Once the local signing node is set up and running, we can try to sign a few transactions from a Raku application. The generic tool is the Net::Ethereum module, Raku’s interface for interacting with the Ethereum blockchain via the JSON RPC API. Here is a short code snippet for Ethereum transaction signing in Raku:

You can dive deeper:

- Pheix::Controller::Blockchain::Signer, the naive signer;
- Pheix::Model::Database::Blockchain::SendTx, the smart signer;
- Net::Ethereum, the signing unit tests.

Pheix CMS uses Pheix::Model::Database::Blockchain::SendTx as the default signing module. The full integration test on the Rinkeby test network with a local signing node in a Docker container takes about 2½ hours.

Make it possible to sign transactions locally

Of course, an Ethereum transaction can also be signed manually. We need the following tools to make it possible: RLP, Secp256k1 and Keccak-256. Finally, once the transaction is successfully signed, we have to send a sendRawTransaction request to the target Ethereum node.

Recursive Length Prefix (RLP)

I started with Recursive Length Prefix (RLP). The purpose of RLP is to encode arbitrarily nested arrays of binary data, and RLP is the main encoding method used to serialize objects in Ethereum. It looks trivial and ready for direct porting to Raku.
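To make the encoding rules concrete, here is a minimal sketch of an RLP encoder in Python. It is only a language-neutral illustration of the scheme, not the API of any Raku module; the names encode_length and rlp_encode_item are my own. A single byte below 0x80 encodes as itself, a byte string gets a length prefix starting at 0x80, and a list gets a prefix starting at 0xc0 followed by its encoded items.

```python
def encode_length(length, offset):
    """Return the RLP prefix for a payload of the given length."""
    if length < 56:
        # Short form: one byte carries both the type offset and the length.
        return bytes([offset + length])
    # Long form: the prefix byte says how many bytes the length itself takes.
    length_bytes = length.to_bytes((length.bit_length() + 7) // 8, "big")
    return bytes([offset + 55 + len(length_bytes)]) + length_bytes

def rlp_encode_item(item):
    """Encode a byte string or an arbitrarily nested list of byte strings."""
    if isinstance(item, (bytes, bytearray)):
        data = bytes(item)
        if len(data) == 1 and data[0] < 0x80:
            return data  # a single small byte is its own encoding
        return encode_length(len(data), 0x80) + data
    if isinstance(item, (list, tuple)):
        payload = b"".join(rlp_encode_item(child) for child in item)
        return encode_length(len(payload), 0xC0) + payload
    raise TypeError("RLP encodes only byte strings and lists")

print(rlp_encode_item(b"dog").hex())             # 83646f67
print(rlp_encode_item([b"cat", b"dog"]).hex())   # c88363617483646f67
print(rlp_encode_item([[], [[]], [[], [[]]]]).hex())
```

Note that integers are not an RLP type: they have to be converted to big-endian byte strings without leading zeros before encoding, which matters later when the transaction fields are packed.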

So the Node::Ethereum::RLP module was implemented: it provides rlp_encode and rlp_decode methods in pure Raku. The usage is quite straightforward:

The main direction for improving Node::Ethereum::RLP is to extend the unit test suite. You can check the brilliant paper «Ethereum’s Recursive Length Prefix in ACL2» by Alessandro Coglio and see that there are a few non-trivial cases to be covered by the module tests.

ECDSA (Secp256k1)

It was a little bit weird to find out that Ethereum uses the cryptography engine for signatures and key management from Bitcoin. Not its own fork with modifications or specific improvements: it is borrowed entirely “as is”. Anyway, that is even better.

The path is clear: we need a Raku binding to Bitcoin’s Secp256k1 library, an optimized C library for ECDSA signatures and secret/public key operations on the elliptic curve secp256k1.

Usage

So, the next stop is the Bitcoin::Core::Secp256k1 module. It has bindings to both the generic and the recoverable APIs. In the context of Ethereum we have to use the recoverable ones, because the signature explicitly uses recovery_param (the parity of the y coordinate on the elliptic curve) and ChainID. Synopsis:

Some implementation details

The implementation was much more complicated than Node::Ethereum::RLP. The trickiest things were (and still are) the pointers to CStructs. If you go through the Secp256k1 C library headers, you will notice that only pointers to structs are passed between the functions. Since Raku does not allocate memory for typed pointers, we need some manual magic.

Consider the Secp256k1 ECDSA signature struct in Raku:

The implementation below was buggy and crashed with segfaults from run to run:

But this one works perfectly (it just allocates 64 bytes for the data member):

So any details are very welcome and any explanations are highly appreciated; let’s discuss it in the comments.

Keccak-256

Keccak is a family of sponge functions developed by the Keccak team; a sponge function takes an input of any length and produces an output of any desired length. Keccak was selected as the winner of the SHA-3 competition held by the National Institute of Standards and Technology (NIST). When the standard was published, NIST adopted the Keccak algorithm in its entirety but modified the message padding by one byte. The two variants produce different outputs, but both are equally secure. “SHA-3” is often used loosely to refer to both SHA-3 and Keccak. Ethereum settled on Keccak before SHA-3 was finalized.

We cannot actually use SHA-3 from the Gcrypt module, because it produces a completely different hash.
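To show how small the difference is, here is a compact Python sketch of the sponge with a 256-bit output, modelled on the Keccak team’s public compact reference code. It is slow and meant only as an illustration, not as the implementation used by any module in this article; the names sponge256 and keccak256 are my own. The only thing that separates the two hashes is the padding suffix byte: 0x01 for the original Keccak-256 and 0x06 for NIST SHA3-256.

```python
import hashlib

RATE = 136  # rate in bytes (1088 bits) used for a 256-bit digest

def rol64(value, shift):
    """Rotate a 64-bit lane to the left."""
    shift %= 64
    return ((value << shift) | (value >> (64 - shift))) & 0xFFFFFFFFFFFFFFFF

def keccak_f1600(state):
    """Apply the 24-round Keccak-f[1600] permutation to a 200-byte state."""
    lanes = [[int.from_bytes(state[8 * (x + 5 * y):8 * (x + 5 * y) + 8], "little")
              for y in range(5)] for x in range(5)]
    lfsr = 1
    for _ in range(24):
        # theta: mix each column parity into the neighbouring columns
        c = [lanes[x][0] ^ lanes[x][1] ^ lanes[x][2] ^ lanes[x][3] ^ lanes[x][4]
             for x in range(5)]
        d = [c[(x + 4) % 5] ^ rol64(c[(x + 1) % 5], 1) for x in range(5)]
        lanes = [[lanes[x][y] ^ d[x] for y in range(5)] for x in range(5)]
        # rho and pi: rotate every lane and move it to a new position
        x, y = 1, 0
        current = lanes[x][y]
        for t in range(24):
            x, y = y, (2 * x + 3 * y) % 5
            current, lanes[x][y] = lanes[x][y], rol64(current, (t + 1) * (t + 2) // 2)
        # chi: non-linear step along each row
        for y in range(5):
            row = [lanes[x][y] for x in range(5)]
            for x in range(5):
                lanes[x][y] = row[x] ^ (~row[(x + 1) % 5] & row[(x + 2) % 5])
        # iota: add the round constant, generated on the fly by an LFSR
        for j in range(7):
            lfsr = ((lfsr << 1) ^ ((lfsr >> 7) * 0x71)) % 256
            if lfsr & 2:
                lanes[0][0] ^= 1 << ((1 << j) - 1)
    out = bytearray(200)
    for x in range(5):
        for y in range(5):
            out[8 * (x + 5 * y):8 * (x + 5 * y) + 8] = lanes[x][y].to_bytes(8, "little")
    return out

def sponge256(data, suffix):
    """Absorb data, pad with the given suffix byte, squeeze 32 bytes."""
    state = bytearray(200)
    offset = 0
    while len(data) - offset >= RATE:  # absorb all full blocks
        for i in range(RATE):
            state[i] ^= data[offset + i]
        state = keccak_f1600(state)
        offset += RATE
    tail = data[offset:]
    for i, byte in enumerate(tail):  # absorb the last partial block
        state[i] ^= byte
    state[len(tail)] ^= suffix       # this byte is the whole difference
    state[RATE - 1] ^= 0x80
    return bytes(keccak_f1600(state)[:32])

def keccak256(data):
    return sponge256(data, 0x01)  # original Keccak padding, as used by Ethereum

message = b"hello world"
print("keccak-256:", keccak256(message).hex())
print("sha3-256:  ", sponge256(message, 0x06).hex())
# The standard library SHA3-256 agrees with the 0x06 variant, not with Keccak-256.
print(hashlib.sha3_256(message).digest() == sponge256(message, 0x06))
```

So a SHA-3 implementation can be turned into Keccak-256 only if it lets you change the padding, which is why a ready-made SHA-3 routine does not help here.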

And finally we have the third module, Node::Ethereum::Keccak256::Native. This module is inspired by Digest::SHA1::Native and also has some pointer magic, as discussed above. The C implementation was taken from the Firefly DIY hardware wallet project, which, by the way, contains the original Keccak-256 from the SHA-3 submission.

To be honest, we can also fetch Keccak-256 hashes from an Ethereum node. But you have to convert your message to hex before the request:

As you can see, Keccak-256 via NativeCall is ~25x faster than Keccak-256 via RPC to a local Ethereum node. I guess the speed-up could be 100x or even more against public nodes.
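For reference, the RPC variant boils down to one web3_sha3 call whose single parameter is the message as a 0x-prefixed hex string. A minimal Python sketch of the request body follows; the helper name web3_sha3_request is hypothetical, and the request is only built, not sent.

```python
import json

def web3_sha3_request(message, request_id=1):
    """Build a JSON RPC body asking a node for the Keccak-256 of a message."""
    # The node expects the raw bytes as a 0x-prefixed hex string.
    return {
        "jsonrpc": "2.0",
        "method": "web3_sha3",
        "params": ["0x" + message.hex()],
        "id": request_id,
    }

request = web3_sha3_request(b"hello world")
print(json.dumps(request))
```

Every hash costs a full HTTP round trip this way, which is where the gap in the benchmark above comes from.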

Run the prototype

Let’s go back to the «Signing node: the prototype» section and figure out what happens under the hood of the eth_signTransaction method from the Net::Ethereum module:

- Net::Ethereum creates the transaction object with all fields in hex;
- Net::Ethereum packs and sends the request to the signing node;
- then the magic happens on the signing node.
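The first two steps can be sketched in Python as follows. This is an assumption about the general shape of an eth_signTransaction request (a single transaction object with hex-encoded fields), not a dump of what Net::Ethereum really sends; the helper name, the addresses and the values are placeholders.

```python
import json

def sign_transaction_request(sender, to, nonce, gas, gas_price, value, data=b"", request_id=1):
    """Build an eth_signTransaction body with all numeric fields in hex."""
    tx = {
        "from": sender,
        "to": to,
        "nonce": hex(nonce),
        "gas": hex(gas),
        "gasPrice": hex(gas_price),
        "value": hex(value),
        "data": "0x" + data.hex(),
    }
    return {"jsonrpc": "2.0", "method": "eth_signTransaction", "params": [tx], "id": request_id}

# Placeholder addresses and values, for illustration only.
sign_request = sign_transaction_request(
    sender="0x" + "aa" * 20,
    to="0x" + "35" * 20,
    nonce=9,
    gas=21000,
    gas_price=20 * 10**9,
    value=10**18,
)
print(json.dumps(sign_request, indent=2))
```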

And let’s do this once again locally in Raku, with a full explanation of the magic that the Geth node hides while signing.

Retrieve signature from Geth endpoint

First let’s run the local-signer.raku script and save the signature from Geth to the ETHEREUM_SIGNATURE environment variable:

Calculate signature locally

Consider the local-signer.raku script: there are a few constants at the top, followed by trivial fetching logic with Net::Ethereum.

First, let’s remove the Geth endpoint from the Net::Ethereum object initialization, to be sure that we are fully local, and create a Node::Ethereum::RLP object:

Then let’s add a few more constants and create the transaction object to be signed:

Now let’s convert the transaction object to an array of buffers @raw with chainid and 2 blanks at the end: (nonce, gasprice, startgas, to, value, data, chainid, 0, 0), as required by EIP-155:
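As a language-neutral illustration of this step, here is a small Python sketch that builds the same nine-element array. The names int_to_bytes and eip155_raw are hypothetical; the sample values are the ones from the example in the EIP-155 text. The important detail is that numbers become big-endian byte strings without leading zeros, so zero becomes an empty string.

```python
def int_to_bytes(number):
    """Minimal big-endian encoding; zero becomes the empty byte string."""
    if number < 0:
        raise ValueError("only non-negative integers can be encoded")
    return number.to_bytes((number.bit_length() + 7) // 8, "big")

def eip155_raw(nonce, gasprice, startgas, to, value, data, chainid):
    """Return the nine fields that get RLP-encoded and hashed for signing."""
    return [
        int_to_bytes(nonce),
        int_to_bytes(gasprice),
        int_to_bytes(startgas),
        bytes.fromhex(to[2:] if to.startswith("0x") else to),
        int_to_bytes(value),
        data,
        int_to_bytes(chainid),
        b"",  # placeholder for r
        b"",  # placeholder for s
    ]

raw = eip155_raw(
    nonce=9,
    gasprice=20 * 10**9,
    startgas=21000,
    to="0x3535353535353535353535353535353535353535",
    value=10**18,
    data=b"",
    chainid=1,
)
for field in raw:
    print(field.hex() or "(empty)")
```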

Next, let’s get the RLP of @raw and then compute the Keccak-256 hash of it:

It’s time to sign the $hash, here we go:

$serialized is a Hash whose signature member is 64 bytes long: the first 32 bytes are the R value and the remaining 32 bytes are the S value. In some cases there are leading zero bytes (0x00), so we should strip them with the skip_lead_nulls() helper subroutine:
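In Python terms the same clean-up could look like the sketch below. The function skip_lead_nulls here is only a stand-in named after the Raku helper, and split_signature is a made-up name. Stripping matters because RLP expects integers without leading zero bytes.

```python
def skip_lead_nulls(chunk):
    """Drop leading 0x00 bytes from a big-endian integer."""
    return chunk.lstrip(b"\x00")

def split_signature(signature):
    """Split a 64-byte compact signature into sanitized R and S values."""
    if len(signature) != 64:
        raise ValueError("compact signature must be exactly 64 bytes")
    return skip_lead_nulls(signature[:32]), skip_lead_nulls(signature[32:])

# Made-up signature bytes: R starts with two zero bytes, S does not.
demo_signature = b"\x00\x00\x01" + b"\xff" * 29 + b"\xaa" * 32
r_value, s_value = split_signature(demo_signature)
print(len(r_value), len(s_value))
```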

Almost done. Now let’s calculate the Ethereum recovery parameter from the recovery bit of the serialized signature; see the EIP-155 reference again:
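The rule from EIP-155 is v = recovery bit + chainid * 2 + 35. A tiny Python sketch of it, with a hypothetical function name:

```python
def eip155_recovery_param(recovery_bit, chain_id):
    """Compute v as defined by EIP-155: {0,1} + CHAIN_ID * 2 + 35."""
    if recovery_bit not in (0, 1):
        raise ValueError("recovery bit must be 0 or 1")
    return recovery_bit + chain_id * 2 + 35

print(eip155_recovery_param(0, 1))  # 37 on mainnet (chain id 1)
print(eip155_recovery_param(1, 4))  # 44 on Rinkeby (chain id 4)
```

Because the chain id is folded into v, a transaction signed for one network cannot be replayed on another one.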

Just patch @raw: remove the data that was required only for Keccak-256 hashing (remember the chainid and the 2 zeros at the end?) and add the R, S and recovery parameter values:
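Sketched in Python, the patch replaces the last three elements of the nine-element array. In the serialized transaction the recovery parameter comes first, followed by R and S. The name patch_raw and the sample bytes are made up for illustration.

```python
def int_to_bytes(number):
    """Minimal big-endian encoding; zero becomes the empty byte string."""
    return number.to_bytes((number.bit_length() + 7) // 8, "big")

def patch_raw(raw, v, r, s):
    """Swap (chainid, 0, 0) for (v, r, s) in the array prepared for hashing."""
    if len(raw) != 9:
        raise ValueError("expected the nine-element EIP-155 array")
    return list(raw[:6]) + [int_to_bytes(v), r, s]

# Made-up field values; r and s stand in for a real signature.
unsigned = [b"\x09", b"\x04", b"\x52\x08", b"\x35" * 20, b"\x01", b"", b"\x01", b"", b""]
signed = patch_raw(unsigned, v=37, r=b"\x11" * 32, s=b"\x22" * 32)
print([field.hex() for field in signed[6:]])
```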

Yep! Let’s do the final steps: get the RLP of the updated @raw and validate the signature:

Full source code: raku-signer.raku, try it out:

You can also find more interesting examples in this repository: https://gitlab.com/pheix-research/manual-ethereum-transaction-signer.

Conclusion

One of the main advantages of a local signer node is the ability to inherit authentication and signing features from the node software. If your request can be authenticated on the signer node, you can easily add mocked/shared accounts, sign and commit transactions without any headache.

The obvious disadvantage is maintenance, configuration, updates and health monitoring. There is also an economic reason: a standalone node requires sufficient resources such as memory and disk space, so you will need an advanced VPS plan for this task. If you use your own physical server, it will impose additional financial and organizational costs.

From this perspective a dApp with self-signing options is the best solution. I should also mention a few more valuable features. I guess the most important one is quick onboarding: just register your free endpoint at one of the external Ethereum providers (Infura, Alchemy, Zmok and others) and start developing your dApp in Raku.

The next one is flexibility when using external Ethereum providers: the JSON RPC API stacks vary from one provider to another. For example, zmok.io is the fastest one, but it does not provide the web3_sha3 API call. Now that is not a problem, as we have Node::Ethereum::Keccak256::Native in place in Net::Ethereum.

Finally, let’s discuss performance. We create a lot of additional HTTP/HTTPS requests when we use a standalone node for signing. As demonstrated in the Keccak-256 section, just the migration to Node::Ethereum::Keccak256::Native can bring a 25x boost.

All sources considered in this article are available here, Merry Christmas!