Since the early Pheix versions, I have paid a lot of attention to the testing system. Initially it was a set of unit tests: I tried to cover a wide range of units such as classes, methods, subroutines and conditions. In some cases I combined unit and functional testing within one .t file, as is done to verify Ethereum or API related functionality.

The tests became somewhat complicated and environment dependent. For example, off-chain testing such as a trivial prove6 -Ilib ./t should skip all Ethereum tests, including some API units, but not the API template engine or cross-module API communications. So I had to create environment-dependent configurations, and from that point I started yet another Pheix-friendly test system.

It was written in pure bash and was included in the Pheix repository for a few years.

In the middle of June 2022, I introduced Coveralls support and got a few requests to publish this test tool separately from Pheix. Consider that moment the birth of the Trove module.

Contributions are greatly appreciated: https://github.com/pheix/raku-trove.

Concepts

Generally, Trove is based on the idea of creating yet another prove6 application: a wrapper over the unit tests in the t folder, but with out-of-the-box GitHub and GitLab CI/CD integration, extended logging and test-dependent options.

Trove includes the trove-cli script as the primary worker for batch testing. It iterates over pre-configured stages and runs the specific unit test linked to each stage. trove-cli is console oriented: all output is printed to the STDOUT and STDERR data streams. Input is taken from command line arguments and a configuration file.

Command line arguments

Colors

To bring colors to the output, the -c option is used:

trove-cli -c --f=`pwd`/run-tests.conf.yml --p=yq

By default this feature is switched off. Colors are good for manual tests, but if you use a runner on GitLab, activated colors can break the coverage collection. GitLab parses the output with a predefined regular expression, and if colors are switched on this feature breaks: text colors are represented by color codes, and these codes somehow impact coverage parsing.
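If you want colors for manual runs but still need a clean log for parsing, one option is to strip the color codes afterwards. The following is a minimal sketch, not part of Trove: the function name strip_colors and the colored sample line are hypothetical, and it assumes the colors are ordinary ANSI SGR sequences (ESC [ ... m).

```shell
# Strip ANSI SGR color sequences (ESC [ ... m) from stdin.
strip_colors() {
    esc=$(printf '\033')
    sed "s/${esc}\[[0-9;]*m//g"
}

# Hypothetical colored line, similar in shape to the trove-cli output.
colored=$(printf '01. Testing ./t/01.t [ \033[32m33%% covered\033[0m ]')

# Prints the line without any escape sequences.
printf '%s\n' "$colored" | strip_colors
```

After stripping, a pattern such as (\d+)% covered only sees plain text.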

Stages management

To exclude specific stages from the test, the -s option is used:

trove-cli -c --s=1,2,4,9,10,11,12,13,14,25,26 --f=`pwd`/run-tests.conf.yml --p=yq

File processor configuration

trove-cli takes the test scenario from a configuration file. The default format is JSON, but you can use YAML on demand; for now the JSON::Fast and YAMLish processing modules (processors) are integrated. To switch between the processors, use the -p command line option: --p=yq selects the YAML processor, as in the examples throughout this article, and without it the default JSON processor is used.

Versions consistency

To verify the version consistency on commit, the next command line options should be used:

- -g – path to the git repo with the version at the latest commit in format %0d.%0d.%0d;
- -v – current version to commit (in format %0d.%0d.%0d as well).

trove-cli -c --g=~/git/raku-foo-bar --v=1.0.0

In the Pheix test suite, trove-cli passes the versions defined by the -g and -v options to the ./t/11-version.t test. The following criteria are verified there: the version at the latest commit in the repo at the -g path is lower than the -v version by 1 (in one of the major, minor or patch members), and the -v version equals the version defined in Pheix::Model::Version.
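The "lower by 1" criterion can be illustrated with a small shell function. This is only a sketch under a simplifying assumption: it accepts a new version when exactly one of major, minor or patch grows by one and the other two members stay unchanged. The function name version_is_next is hypothetical, and the real check in ./t/11-version.t may be more permissive.

```shell
# Succeeds if version $2 is one step ahead of version $1 in exactly one
# member (major, minor or patch) and the other members are unchanged.
# Simplified reading of the rule, not the actual Pheix test code.
version_is_next() {
    p_major=${1%%.*}; p_rest=${1#*.}; p_minor=${p_rest%%.*}; p_patch=${p_rest#*.}
    c_major=${2%%.*}; c_rest=${2#*.}; c_minor=${c_rest%%.*}; c_patch=${c_rest#*.}
    diff="$((c_major - p_major)).$((c_minor - p_minor)).$((c_patch - p_patch))"
    case $diff in
        1.0.0|0.1.0|0.0.1) return 0 ;;
        *) return 1 ;;
    esac
}

version_is_next 0.13.116 0.13.117 && echo "0.13.117 follows 0.13.116"
version_is_next 0.13.116 0.13.116 || echo "0.13.116 does not follow 0.13.116"
```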

You can try it on v0.13.116:

    trove-cli -c --f=`pwd`/run-tests.conf.yml --p=yq --g=`pwd` --v=0.13.117
    ...
    # Failed test 'curr git commit ver {0.13.117} and Version.pm {0.13.116} must be equal'
    # at ./t/11-version.t line 25
    # Failed test 'prev git commit ver {0.13.116} and Version.pm {0.13.116} must differ by 1.0.0 || x.1.0 || x.x.1'
    # at ./t/11-version.t line 39
    # You failed 2 tests of 6
    # Failed test 'Check version'
    # at ./t/11-version.t line 21
    # You failed 1 test of 1
    13. Testing ./t/11-version.t [ FAIL ]
    [ error at stage 13 ]

The version consistency check is used in the commit-msg hook to verify the version given by the committer in the commit message:

    commit 5d867e4e15928ef7a98f07c8753033339aa5cf7f
    Author: Konstantin Narkhov
    Date:   Sun Dec 4 17:16:07 2022 +0300

        [ 0.13.116 ] Set Trove as default test suite

        1. Use Trove in commit-msg hook
        2. Set Trove as default test suite

Target configuration file

By default the following configuration targets are used:

- JSON – ./x/trove-configs/test.conf.json;
- YAML – ./x/trove-configs/test.conf.yaml.

These paths are used to test Trove itself with:

cd ~/git/raku-trove && bin/trove-cli -c && bin/trove-cli -c --p=yq

To use another configuration file, specify it via the -f option:

trove-cli --f=/tmp/custom.jq.conf

First stage logging policy

trove-cli is obviously used to test Pheix. The first Pheix testing stage checks the www/user.raku script with:

raku $WWW/user.raku --mode=test # WWW == './www'

This command prints nothing to standard output, so nothing needs to be saved to the log file. By default the first stage output is ignored. But if you use Trove to test some other module or application, it might be handy to force saving of the first stage output. This is done with the -l command line argument:

trove-cli --f=/tmp/custom.jq.conf -l

If a stage with blank output is not skipped, it is included in the coverage scope but marked as WARN in the trove-cli output:

    01. Testing ./www/user.raku [ WARN ]
    02. Testing ./t/cgi/cgi_post_test.sh [ 6% covered ]
    ...

Origin repository

By default the origin repository is set to git@github.com:pheix/raku-trove.git, and you can change it to any value you prefer with the -o argument:

trove-cli --f=/tmp/custom.jq.conf --o=git@gitlab.com:pheix/net-ethereum-perl6.git

This can be handy for displaying git related details about your project on Coveralls.

Configurations

Trivial test configuration example

A trivial multi-interpreter one-liner test configuration file is included in Trove:

    target: Trivial one-liner test
    stages:
      - test: raku -eok(1); -MTest
      - test: perl6 -eis($CONSTANT,2); -MTest
        args:
          - CONSTANT
      - test: perl -eok(3);done_testing; -MTest::More

Test command to be executed (CONSTANT is passed through the environment of trove-cli):

    CONSTANT=2 trove-cli --f=/home/pheix/pool/core-perl6/run-tests.conf.yml.oneliner --p=yq -c

Command output messages:

    01. Testing -eok(1,'true'); [ 33% covered ]
    02. Testing -eis(2,2,'2=2'); [ 66% covered ]
    03. Testing -eok(3,'perl5');done_testing; [ 100% covered ]
    Skip send report to coveralls.io: CI/CD identifier is missed

Pheix test suite configuration files

The Pheix test suite configuration files use the full set of features discussed above: stages, substages, export of environment variables, setup and cleanup. These files (JSON, YAML) can be used as basic examples for creating a test configuration for yet another module or application, whether it is written in Raku, Perl or something else.

Sample snippet from run-tests.conf.yml:

    target: Pheix test suite
    stages:
      - test: 'raku $WWW/user.raku --mode=test'
        args:
          - WWW
      - test: ./t/cgi/cgi_post_test.sh
        substages:
          - test: raku ./t/00-november.t
      ...
      - test: 'raku ./t/11-version.t $GITVER $CURRVER'
        args:
          - GITVER
          - CURRVER
      ...
      - test: raku ./t/17-headers-proto-sn.t
        environment:
          - export SERVER_NAME=https://foo.bar
        cleanup:
          - unset SERVER_NAME
        substages:
          - test: raku ./t/17-headers-proto-sn.t
            environment:
              - export SERVER_NAME=//foo.bar/
            cleanup:
              - unset SERVER_NAME
      - test: raku ./t/18-headers-proto.t
        substages:
          - test: raku ./t/18-headers-proto.t
            environment:
              - export HTTP_REFERER=https://foo.bar
            cleanup:
              - unset HTTP_REFERER
      ...
      - test: raku ./t/29-deploy-smart-contract.t

Test coverage management

Gitlab

The coverage percentage in GitLab is retrieved from the job's standard output: while your tests are running, you have to print the actual test progress in percent to the console (STDOUT). The output log is parsed by the runner when the job finishes; the matching pattern should be set up in .gitlab-ci.yml, the CI/CD configuration file.

Consider the trivial test configuration example from the section above. The standard output is:

    01. Running -eok(1,'true'); [ 33% covered ]
    02. Running -eis(2,2,'2=2'); [ 66% covered ]
    03. Running -eok(3,'perl5');done_testing; [ 100% covered ]

The matching pattern in .gitlab-ci.yml is set up as follows:

    ...
    trivial-test:
      stage: trivial-test-stable
      coverage: '/(\d+)% covered/'
      ...

To test your matching pattern with a Perl one-liner, save your runner's standard output to a file, e.g. /tmp/coverage.txt, and run the command:

    perl -lne 'print $1 if $_ =~ /(\d+)% covered/' < /tmp/coverage.txt

You will get:

    33
    66
    100

The highest (last) value will be used by GitLab as the test coverage value in percent for your test. Pheix, for example, reaches 100% coverage this way.
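To see which single value ends up as the coverage figure, you can reduce the log to its last match. This is a minimal sketch with plain sed and tail; the function name last_coverage is hypothetical, and the sample lines are the ones from the trivial example above.

```shell
# Print the last "N% covered" value found on stdin (nothing if there is none).
last_coverage() {
    sed -n 's/.*[^0-9]\([0-9][0-9]*\)% covered.*/\1/p' | tail -n 1
}

# Prints 100
printf '%s\n' \
    "01. Running -eok(1,'true'); [ 33% covered ]" \
    "02. Running -eis(2,2,'2=2'); [ 66% covered ]" \
    "03. Running -eok(3,'perl5');done_testing; [ 100% covered ]" |
    last_coverage
```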

Coveralls

Basics

Coveralls is a web service that allows users to track the code coverage of their application over time in order to optimize the effectiveness of their unit tests. Trove includes Coveralls integration via API.

The API reference is quite clear: the generic objects are job and source_file. An array of source files should be included in the job:

    {
      "service_job_id": "1234567890",
      "service_name": "Trove::Coveralls",
      "source_files": [
        {
          "name": "foo.raku",
          "source_digest": "3d2252fe32ac75568ea9fcc5b982f4a574d1ceee75f7ac0dfc3435afb3cfdd14",
          "coverage": [null, 1, null]
        },
        {
          "name": "bar.raku",
          "source_digest": "b2a00a5bf5afba881bf98cc992065e70810fb7856ee19f0cfb4109ae7b109f3f",
          "coverage": [null, 1, 4, null]
        }
      ]
    }

In the example above we covered foo.raku and bar.raku with our tests. File foo.raku has 3 lines of source code and only line no.2 is covered. File bar.raku has 4 lines of source code; lines no.2 and no.3 are covered, the 2nd just once and the 3rd four times.
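To make the reading of a coverage array concrete, here is a small sketch that counts lines, relevant lines (not null) and covered lines (hit count above zero). The function name coverage_summary is hypothetical, and the array elements are passed as plain arguments rather than parsed from JSON.

```shell
# Summarize a Coveralls-style coverage array given as arguments:
# "null" marks a line that is not relevant, a number is the hit count.
coverage_summary() {
    lines=0; relevant=0; covered=0
    for hits in "$@"; do
        lines=$((lines + 1))
        if [ "$hits" != null ]; then
            relevant=$((relevant + 1))
            if [ "$hits" -gt 0 ]; then
                covered=$((covered + 1))
            fi
        fi
    done
    echo "lines=$lines relevant=$relevant covered=$covered"
}

coverage_summary null 1 null      # foo.raku: lines=3 relevant=1 covered=1
coverage_summary null 1 4 null    # bar.raku: lines=4 relevant=2 covered=2
```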

Test suite integration

Coverage concept

The basic idea behind the Trove and Coveralls integration is that we do not cover lines of source files. We assume that a unit test is a black box that covers all target functionality: if the unit test run is successful, we mark some part of our software as covered, otherwise this part is out of order.

The number of unit tests should be equal to the number of parts of the software under test, and if all tests are successful we mark the whole software as tested and all source code as covered.
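Under this concept the running percentage is just the share of stages passed so far. The sketch below reproduces the 33, 66 and 100 values seen in the sample output; it assumes integer division (which matches those values) and uses a hypothetical function name, so it is not necessarily how Trove computes the figure internally.

```shell
# Percentage covered after stage $1 of $2 stages, using integer division.
stage_coverage() {
    echo $(( $1 * 100 / $2 ))
}

total=3
stage=1
while [ "$stage" -le "$total" ]; do
    printf '%02d. stage passed [ %s%% covered ]\n' "$stage" "$(stage_coverage "$stage" "$total")"
    stage=$((stage + 1))
done
```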

Of course, this concept has a weak point. Since the unit test is considered a black box, we cannot guarantee its quality at all. In the worst case it could be just a stub with no test logic behind it.

On the other hand, the TAP concept does not require line-by-line testing: the maintainer decides how many tests should be developed to cover the software functionality. And of course, we do not expect blank or non-functional unit tests. All of them should really work, and if we cannot cover some complicated logic or algorithm with a single unit test, we should use a few separate ones.

Integration details

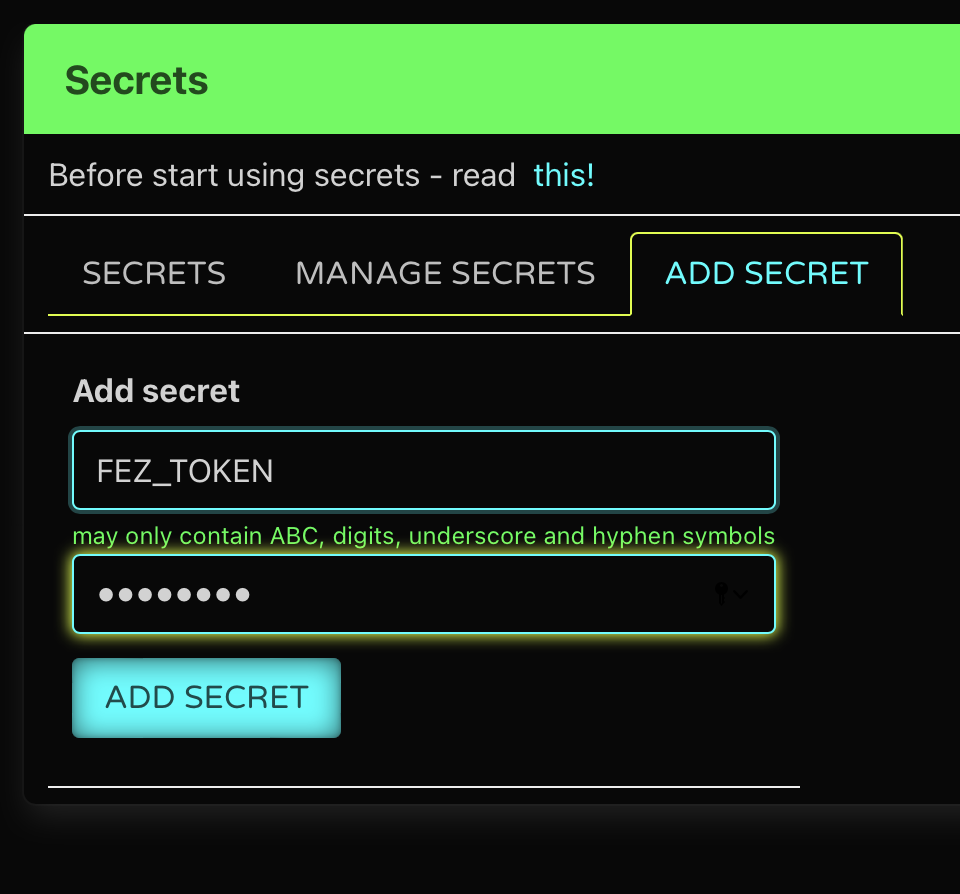

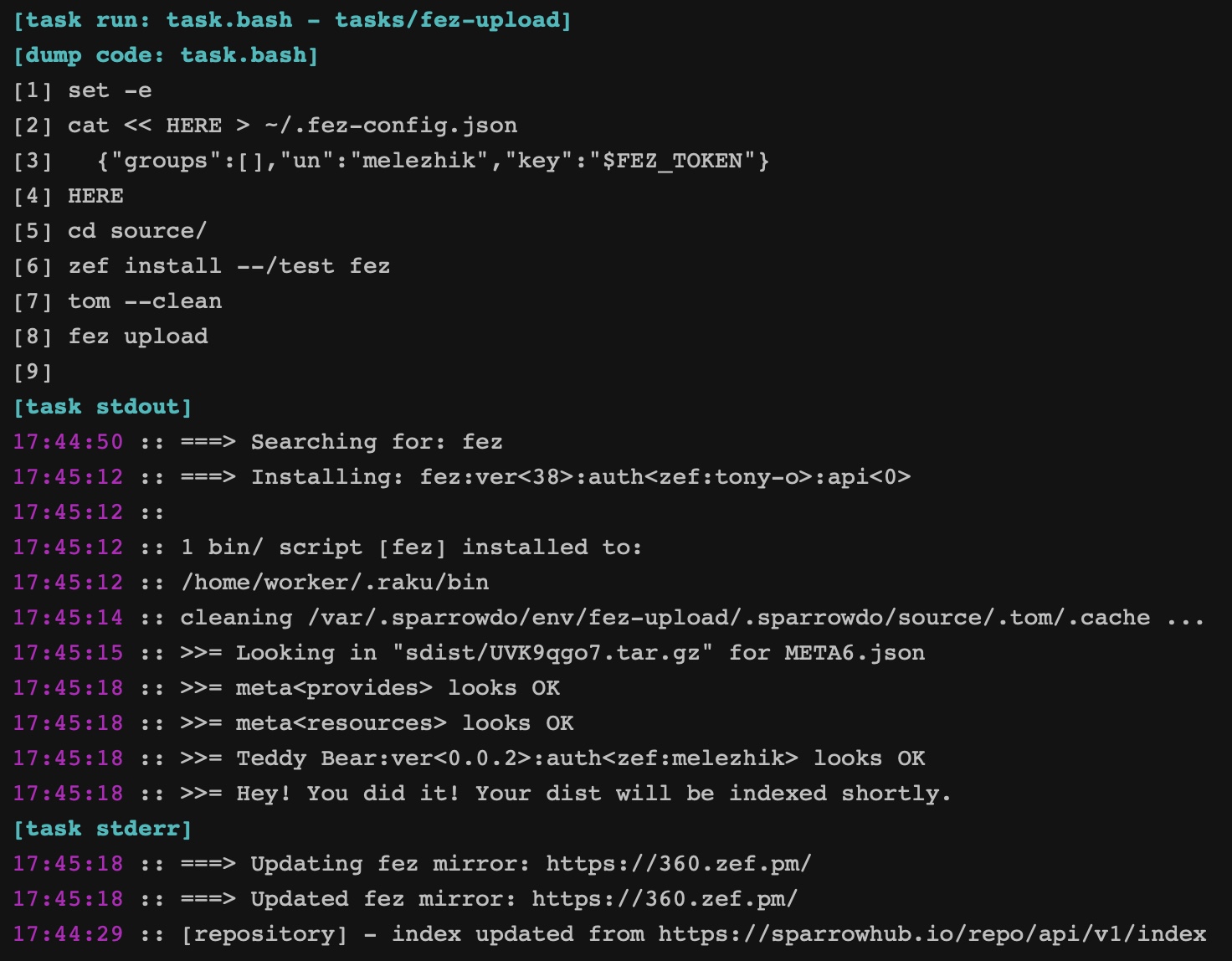

We use a secret token to make requests to Coveralls via the API. Since the GitLab runner is used for testing, the secret token is stored as a protected and masked variable.

As described in the coverage concept section, we assume full coverage for a software part if its unit test passes. This part is represented by its unit test, so the source_files section in the Coveralls request looks like:

    ...
    "source_files": [
      {
        "name": "./t/01.t",
        "source_digest": "be4b2d7decf802cbd3c1bd399c03982dcca074104197426c34181266fde7d942",
        "coverage": [ 1 ]
      },
      {
        "name": "./t/02.t",
        "source_digest": "2d8cecc2fc198220e985eed304962961b28a1ac2b83640e09c280eaac801b4cd",
        "coverage": [ 1 ]
      }
    ]
    ...

We do not track individual lines, so it's enough to set the coverage member to [ 1 ].

Besides the source_files member we have to set up a git member as well. It is documented as optional, but your build reports on the Coveralls side will look anonymous without git details (commit, branch, message, etc.).
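A single source_files entry of this shape can be produced with standard tools. The sketch below is an illustration only: the function name source_file_entry is hypothetical, it assumes the digest is a SHA-256 of the file content (the digests shown above are 64 hex characters long), and it does not JSON-escape the file name.

```shell
# Print one source_files entry for file $1 with coverage value $2.
# Assumption: source_digest is the SHA-256 of the file content.
source_file_entry() {
    if command -v sha256sum >/dev/null 2>&1; then
        digest=$(sha256sum "$1" | cut -d ' ' -f 1)
    else
        digest=$(shasum -a 256 "$1" | cut -d ' ' -f 1)
    fi
    printf '{ "name": "%s", "source_digest": "%s", "coverage": [ %s ] }\n' "$1" "$digest" "$2"
}

# Example with a throwaway file standing in for a unit test.
sample=$(mktemp)
printf 'ok 1\n' > "$sample"
source_file_entry "$sample" 1
rm -f "$sample"
```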

You can check how Coveralls integration is done at the Trove::Coveralls module: https://github.com/pheix/raku-trove/blob/main/lib/Trove/Coveralls.rakumod.

How it looks on the Coveralls side

Project overview

Unit tests summary

Recent builds

Log test session

While testing, trove-cli does not print any TAP messages to standard output. Consider the trivial multi-interpreter one-liner test again:

    01. Running -eok(1,'true'); [ 33% covered ]
    02. Running -eis(2,2,'2=2'); [ 66% covered ]
    03. Running -eok(3,'perl5');done_testing; [ 100% covered ]

In the background trove-cli saves the full log with extended test details. The log file is saved to the current (working) directory and has the following file name format: testreport.*.log, where * is the test run date, for example: testreport.2022-10-18_23-21-12.log.
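A file name in the same format is easy to build with date. This is a sketch only: the function name report_name is hypothetical, and the date format string is inferred from the example name above.

```shell
# Build a log file name like testreport.2022-10-18_23-21-12.log
# from the current date and time.
report_name() {
    echo "testreport.$(date +%Y-%m-%d_%H-%M-%S).log"
}

report_name
```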

Test command to be executed:

cd ~/git/raku-trove && CONSTANT=2 bin/trove-cli --f=`pwd`/x/trove-configs/tests.conf.yml.oneliner --p=yq -c -l

The content of the testreport.*.log file is:

    ----------- STAGE no.1 -----------
    ok 1 - true
    ----------- STAGE no.2 -----------
    ok 1 - 2=2
    ----------- STAGE no.3 -----------
    ok 1 - perl5
    1..1

Usage for any module or application

Honestly, we can use trove-cli to test any software, but obviously it fits Raku or Perl modules and applications best.

Let's try Trove with:

- Acme::Insult::Lala: Raku module by @jonathanstowe
- Acme: Perl module by @INGY

Acme::Insult::Lala

trove-cli is available as an independent module, so the first step is to install it.

zef install Trove

Next step is to clone Acme::Insult::Lala to /tmp:

cd /tmp && git clone https://github.com/jonathanstowe/Acme-Insult-Lala.git

Now we have to create a Trove configuration file for the Acme::Insult::Lala module. Let's check how many unit tests this module has:

    ls -la /tmp/Acme-Insult-Lala/t
    # drwxr-xr-x 2 kostas kostas 4096 Oct 23 14:56 .
    # drwxr-xr-x 7 kostas kostas 4096 Oct 23 15:19 ..
    # -rw-r--r-- 1 kostas kostas 517 Oct 23 14:56 001-meta.t
    # -rw-r--r-- 1 kostas kostas 394 Oct 23 14:56 010-basic.t

Just 001-meta.t and 010-basic.t, so the configuration file should contain:

    target: Acme::Insult::Lala
    stages:
      - test: raku /tmp/Acme-Insult-Lala/t/001-meta.t
      - test: raku /tmp/Acme-Insult-Lala/t/010-basic.t

Save it to /tmp/Acme-Insult-Lala/.run-tests.conf.yml and run the test:

RAKULIB=lib trove-cli --f=/tmp/Acme-Insult-Lala/.run-tests.conf.yml --p=yq -l -c

Command output messages:

    01. Testing /tmp/Acme-Insult-Lala/t/001-meta.t [ 50% covered ]
    02. Testing /tmp/Acme-Insult-Lala/t/010-basic.t [ 100% covered ]
    Skip send report to coveralls.io: CI/CD identifier is missed

Log file content:

    ----------- STAGE no.1 -----------
    1..1
    # Subtest: Project META file is good
        ok 1 - have a META file
        ok 2 - META parses okay
        ok 3 - have all required entries
        ok 4 - 'provides' looks sane
        ok 5 - Optional 'authors' and not 'author'
        ok 6 - License is correct
        ok 7 - name has a '::' rather than a hyphen (if this is intentional please pass :relaxed-name to meta-ok)
        ok 8 - no 'v' in version strings (meta-version greater than 0)
        ok 9 - version is present and doesn't have an asterisk
        ok 10 - have usable source
        1..10
    ok 1 - Project META file is good
    ----------- STAGE no.2 -----------
    ok 1 - create an instance
    ok 2 - generate insult
    ok 3 - and its defined
    ok 4 - and 'rank beef-witted hempseed' has at least five characters
    ok 5 - generate insult
    ok 6 - and its defined
    ok 7 - and 'churlish rough-hewn flap-dragon' has at least five characters
    ok 8 - generate insult
    ok 9 - and its defined
    ok 10 - and 'sottish common-kissing pignut' has at least five characters
    ok 11 - generate insult
    ok 12 - and its defined
    ok 13 - and 'peevish dismal-dreaming vassal' has at least five characters
    ok 14 - generate insult
    ok 15 - and its defined
    ok 16 - and 'brazen bunched-backed harpy' has at least five characters
    ok 17 - generate insult
    ok 18 - and its defined
    ok 19 - and 'jaded crook-pated gudgeon' has at least five characters
    ok 20 - generate insult
    ok 21 - and its defined
    ok 22 - and 'waggish shrill-gorged manikin' has at least five characters
    ok 23 - generate insult
    ok 24 - and its defined
    ok 25 - and 'goatish weather-bitten horn-beast' has at least five characters
    ok 26 - generate insult
    ok 27 - and its defined
    ok 28 - and 'hideous beef-witted maggot-pie' has at least five characters
    ok 29 - generate insult
    ok 30 - and its defined
    ok 31 - and 'bootless earth-vexing giglet' has at least five characters
    1..31

All updates are in my forked repo: https://github.com/pheix/Acme-Insult-Lala.

Acme

Assume that Trove has been installed successfully. Now you have to download and unzip Acme to /tmp/Acme-perl5.

The next steps are the same as the ones we did for Acme::Insult::Lala:

- check the unit tests for the Acme module with ls -la /tmp/Acme-perl5/t
- add the Trove config file .run-tests.conf.yml to /tmp/Acme-perl5

Content of the .run-tests.conf.yml configuration file for the Acme module:

    target: Perl5 Acme v1.11111111111
    stages:
      - test: perl /tmp/Acme-perl5/t/acme.t
      - test: perl /tmp/Acme-perl5/t/release-pod-syntax.t

Run the test with:

PERL5LIB=lib trove-cli --f=/tmp/Acme-perl5/.run-tests.conf.yml --p=yq -l -c

Command output messages:

    01. Testing /tmp/Acme-perl5/t/acme.t [ 50% covered ]
    02. Testing /tmp/Acme-perl5/t/release-pod-syntax.t [ SKIP ]
    Skip send report to coveralls.io: CI/CD identifier is missed

Log file content:

    ----------- STAGE no.1 -----------
    ok 1
    ok 2
    ok 3
    1..3
    ----------- STAGE no.2 -----------
    1..0 # SKIP these tests are for release candidate testing

Try these updates with my forked repo: https://gitlab.com/pheix-research/perl-acme/.

Integration with CI/CD environments

Github

Consider the module Acme::Insult::Lala. To integrate Trove into the GitHub Actions CI/CD environment, we have to create .github/workflows/pheix-test-suite.yml with the following instructions:

    name: CI
    on:
      push:
        branches: [ master ]
      pull_request:
        branches: [ master ]
    jobs:
      build:
        runs-on: ubuntu-latest
        container:
          image: rakudo-star:latest
        steps:
          - uses: actions/checkout@v2
          - name: Perform test with Pheix test suite
            run: |
              wget -qO /usr/local/bin/yq https://github.com/mikefarah/yq/releases/latest/download/yq_linux_amd64 && chmod a+x /usr/local/bin/yq
              zef install Trove
              ln -s `pwd` /tmp/Acme-Insult-Lala
              cd /tmp/Acme-Insult-Lala && RAKULIB=lib trove-cli --f=/tmp/Acme-Insult-Lala/.run-tests.conf.yml --p=yq -l -c
              cat `ls | grep "testreport"`

The CI/CD magic happens in the run instruction. Let's explain it line by line:

- wget ... – manual yq binary installation;
- zef install Trove – install the Trove test tool;
- ln -s ... – create the module path consistent with .run-tests.conf.yml;
- cd /tmp/Acme-Insult-Lala && ... – run the tests;
- cat ... – print the test log.

Check the job: https://github.com/pheix/Acme-Insult-Lala/actions/runs/3621090976/jobs/6104091041

Gitlab

Let's integrate the Perl 5 module Acme with Trove into the GitLab CI/CD environment. We have to create .gitlab-ci.yml with the following instructions:

    image: rakudo-star:latest
    before_script:
      - apt update && apt -y install libspiffy-perl
      - wget -qO /usr/local/bin/yq https://github.com/mikefarah/yq/releases/latest/download/yq_linux_amd64 && chmod a+x /usr/local/bin/yq
      - zef install Trove
      - ln -s `pwd` /tmp/Acme-perl5
    test:
      script:
        - cd /tmp/Acme-perl5 && PERL5LIB=lib trove-cli --f=/tmp/Acme-perl5/.run-tests.conf.yml --p=yq -l -c
        - cat `ls | grep "testreport"`
      only:
        - main

On GitLab the CI/CD magic happens in the before_script and test/script instructions. The behavior is exactly the same as in the run instruction for the GitHub action.

Check the job: https://gitlab.com/pheix-research/perl-acme/-/jobs/3424335705

Perspectives: integrate subtest results to coverage

How it works now

As described above, we do not cover lines of source files. We assume that a unit test covers all target functionality: if the unit test run is successful, we mark it 100% covered, otherwise it is failed, 0%. Roughly speaking, from the perspective of Coveralls source coverage, each source file to be covered is reduced to one huge one-liner:

    {
      "name": "module.rakumod",
      "source_digest": "8d266061dcae5751eda97450679d6c69ce3dd5aa0a2936e954af552670853aa9",
      "coverage": [ 1 ]
    }

Subtests

Most unit tests have subtests inside. The plan is to use subtest results as additional coverage “lines”. Consider a unit test with a few subtests under the hood:

    use v6.d;
    use Test;

    plan 3;

    subtest {ok(1,'true');}, 'subtest no.1';
    subtest {ok(2,'true');}, 'subtest no.2';
    subtest {ok(3,'true');}, 'subtest no.3';

    done-testing;

Coveralls coverage will be:

    {
      "name": "trivial.t",
      "source_digest": "d77f2fa9b43f7229baa326cc6fa99ed0ef6e1ddd56410d1539b6ade5d41cb09f",
      "coverage": [1, 1, 1]
    }

And if one of the subtests fails, we will get 66% coverage in summary, instead of 0% as it is now.
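As a rough illustration of this idea (it is a perspective, not something Trove does today), the top-level TAP lines of a test run could be mapped to coverage entries like this. The function name tap_to_coverage is hypothetical; the sketch assumes that subtest results appear as unindented ok / not ok lines and that nested test points are indented, so they are ignored.

```shell
# Turn top-level TAP results read from stdin into a coverage array and a
# percentage: "ok" becomes 1, "not ok" becomes 0, indented lines are ignored.
tap_to_coverage() {
    awk '
        /^ok /     { hits = hits sep "1"; sep = ", "; total++; passed++ }
        /^not ok / { hits = hits sep "0"; sep = ", "; total++ }
        END {
            if (total == 0) { print "no top-level test points found"; exit 1 }
            printf "coverage: [%s], %d%% covered\n", hits, int(passed * 100 / total)
        }'
}

# Prints: coverage: [1, 0, 1], 66% covered
printf '%s\n' \
    "ok 1 - subtest no.1" \
    "not ok 2 - subtest no.2" \
    "ok 3 - subtest no.3" |
    tap_to_coverage
```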

Afterword

Bash vs Raku

Actually, Trove's avatar, the Pheix test tool run-tests.bash (a bash script), is still available and can be used with exactly the same functionality as Trove. Obviously run-tests.bash has a few bash related advantages:

- cross platform: bash is everywhere in the Linux world;

- maintenance: bash is universal and bash scripts are considered a logical platform for automation and testing. I can imagine that, from the perspective of a Python developer, it's okay to use a test tool written in bash, but suspicious to use the same system in Raku because of language specifics.

run-tests.bash works with external processors for configuration file parsing: the JSON processor jq (widely available in different Linux distros) and the YAML processor yq (probably a hacker/geek's tool).

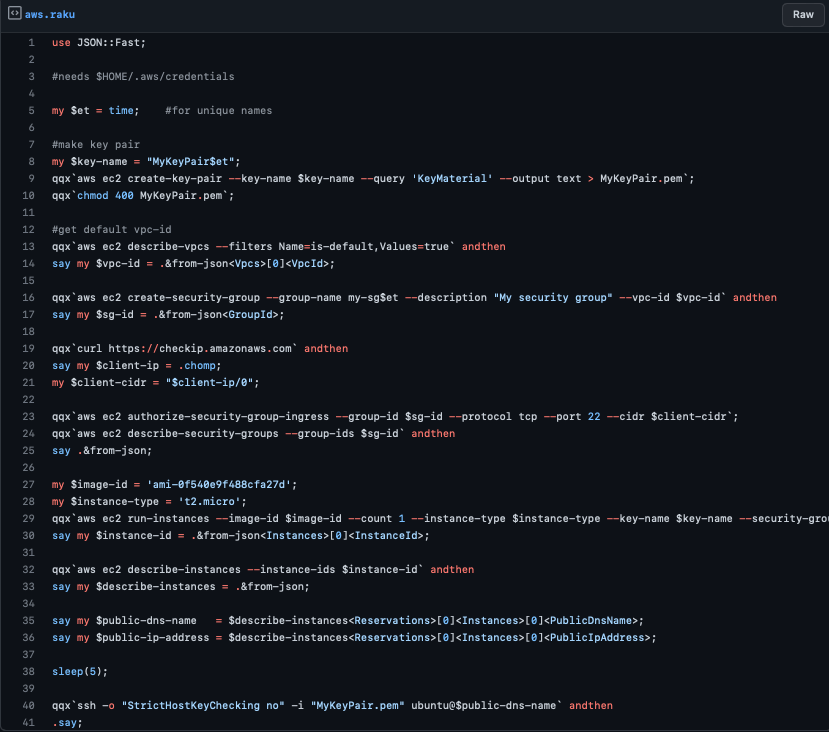

I have a project in C and use run-tests.bash as the default test tool. This project is hosted on GitLab and has a trivial CI/CD configuration:

    test-io-database:
      coverage: '/(\d+)% covered/'
      before_script:
        ...
        - wget -qO /usr/local/bin/yq https://github.com/mikefarah/yq/releases/latest/download/yq_linux_amd64 && chmod a+x /usr/local/bin/yq
        - git clone https://gitlab.com/pheix-pool/core-perl6.git /pheix
        - ln -sf /pheix/run-tests.bash run-tests.bash
      script:
        ...
        - bash run-tests.bash -f .run-tests.conf.yml -p yq -l -c
      after_script:
        - cat `ls | grep "testreport"`
      artifacts:
        paths:
          - $CI_PROJECT_DIR/testreport.*
        when: always
        expire_in: 1 year
      only:
        - master
        - devel
        - merge_requests

I skipped project specific actions by ..., but you can check full .gitlab-ci.yml here. The pipeline’s output is:

    ...
    $ bash run-tests.bash -f .run-tests.conf.yml -p yq -l -c
    Colors in output are switch on!
    Config processor yq is used
    Skip delete of ./lib/.precomp folder: not existed
    01. Running ./debug/test-tags [ 25% covered ]
    02. Running ./debug/test-statuses [ 50% covered ]
    03. Running ./debug/test-events [ 75% covered ]
    04. Running ./debug/test-bldtab [ 100% covered ]
    Skip send report to coveralls.io: repository token is missed
    ...

The job output is logged to the testreport.2022-12-07_16-36-16.log file, which is available in the job's artifacts. Coverage was collected and is used for the project's badge.

Performance

The last thing I would like to mention is performance. Trove is ~5% faster than the bash avatar on the Pheix test suite and almost equal to prove6:

    rm -rf .precomp lib/.precomp/ && time bash -c "bash run-tests.bash -c"
    ...
    # real 1m15.644s
    # user 1m44.014s
    # sys 0m7.885s

    rm -rf .precomp lib/.precomp/ && time trove-cli -c --f=`pwd`/run-tests.conf.yml --p=yq
    ...
    # real 1m11.679s
    # user 1m39.849s
    # sys 0m8.060s

    rm -rf .precomp lib/.precomp/ && time prove6 t
    ...
    # real 1m10.110s
    # user 1m38.654s
    # sys 0m7.643s

And finally, I was very surprised by the Perl prove utility, an old, true 🇨🇭 chainsaw:

    rm -rf .precomp lib/.precomp/ && time prove -e 'raku -Ilib'
    ...
    # real 0m57.986s
    # user 1m19.779s
    # sys 0m6.465s

That’s all!

Christmas Eve is a nice time to use Trove or its bash avatar. Enjoy them!